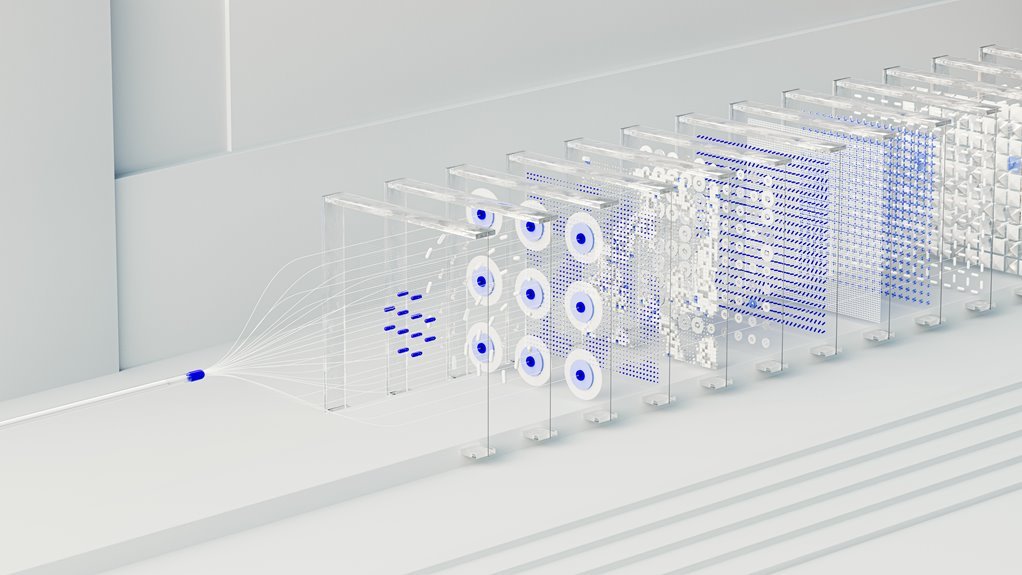

Hyper Node 931815261 Neural Prism

Hyper Node 931815261 Neural Prism is a modular framework that combines neural processing with autonomous, interoperable components. It assigns tasks to edge or cloud based on data locality, latency, and available resources, pairing on-device inference with coordinated cloud tasks to improve both performance and privacy. Real-world deployments show measurable gains in latency and resource utilization. The architecture notes cover setup, training discipline, and deployment constraints, so practitioners can assess integration points and trade-offs before adopting it.

What Is Hyper Node 931815261 Neural Prism?

Hyper Node 931815261 Neural Prism is a conceptual framework (or product line) that integrates advanced neural processing with a modular, prism-like architecture. It defines capabilities, components, and interfaces without prescribing how they must be implemented.

Edge computation handles on-device inference, while cloud orchestration coordinates distributed tasks. This split supports flexible deployment and lets components stay autonomous and interoperable while scaling in a controlled way across diverse environments.

How Neural Prism Balances Edge and Cloud Compute

The Neural Prism framework balances edge and cloud compute by distributing tasks according to data locality, latency constraints, and resource availability. Tasks stay on the edge when the data is already local and the latency budget demands a quick response, while complex aggregation and model updates go to the cloud, where coordination scales more easily. This approach minimizes round-trip delays, conserves bandwidth, and keeps inference responsive across diverse environments.
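The placement rule above can be sketched as a simple heuristic. This is a minimal illustration under stated assumptions, not Neural Prism's actual scheduler: the `Task` and `EdgeNode` types, their fields, the capacity units, and the decision order are all hypothetical. A task runs on the edge if it fits the device's free capacity and either its data is already local or a cloud round trip alone would exceed its latency budget; everything else goes to the cloud.

```python
from dataclasses import dataclass


# Hypothetical types for illustration only; not part of any published Neural Prism API.
@dataclass
class Task:
    name: str
    data_on_device: bool      # True if the input data already lives on the edge node
    latency_budget_ms: float  # maximum acceptable response time
    compute_cost: float       # estimated work, in arbitrary (assumed) units


@dataclass
class EdgeNode:
    free_capacity: float      # work units currently available on the device
    cloud_rtt_ms: float       # measured round-trip time to the cloud


def place(task: Task, node: EdgeNode) -> str:
    """Return "edge" or "cloud" using a simple locality/latency/resource heuristic."""
    fits_on_edge = task.compute_cost <= node.free_capacity
    # True when a cloud round trip alone would already exceed the latency budget.
    cloud_too_slow = node.cloud_rtt_ms >= task.latency_budget_ms
    if fits_on_edge and (task.data_on_device or cloud_too_slow):
        return "edge"
    # Otherwise the task is too heavy for the device, or there is no
    # locality or latency reason to keep it local, so send it to the cloud.
    return "cloud"


if __name__ == "__main__":
    node = EdgeNode(free_capacity=8.0, cloud_rtt_ms=60.0)
    for task in [
        Task("anomaly-detect", data_on_device=True, latency_budget_ms=20.0, compute_cost=2.0),
        Task("fleet-aggregate", data_on_device=False, latency_budget_ms=500.0, compute_cost=4.0),
        Task("model-update", data_on_device=False, latency_budget_ms=5000.0, compute_cost=50.0),
    ]:
        print(f"{task.name}: {place(task, node)}")
```

Run as a script, this prints `edge` for the latency-bound local task and `cloud` for the aggregation and model-update tasks. A production scheduler would also weigh bandwidth, energy, and queueing, which this sketch ignores.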

Real-World Use Cases and Performance Gains

Real-world deployments of Neural Prism show measurable gains across diverse scenarios, from manufacturing floors to autonomous fleets.

Observed improvements include faster edge inference, lower end-to-end latency, and more efficient resource utilization.

Edge optimization lets decisions be made close to the data sources, and keeping data local preserves both context and privacy.

These results span predictive maintenance, real-time routing, and adaptive control, without compromising reliability.

Getting Started: Architecture, Training, and Deployment Notes

Setting up Neural Prism starts with an overview of its core components, because architecture choices shape both the training approach and the deployment constraints seen in production.

This section outlines modularity, data pipelines, and compute orchestration, with an emphasis on edge federation and latency optimization.

It lays out steps for setup, training discipline, and deployment, aiming for practice that is scalable, interoperable, and resilient while preserving the autonomy of each component.
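Edge federation is not specified further in these notes. As a hedged illustration only, the sketch below assumes federated averaging (FedAvg), a widely used technique that the text does not name: each edge node trains locally and reports its weights with its local sample count, and the cloud combines them into a weighted average. The function and parameter names are hypothetical.

```python
from __future__ import annotations


def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Combine edge model weights into one global model (FedAvg-style sketch).

    Each update is (weights, num_local_samples); nodes that trained on more
    data contribute proportionally more to the averaged weights.
    """
    if not updates:
        raise ValueError("need at least one update")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            # Weight each node's parameter by its share of the total samples.
            averaged[i] += w * (n / total)
    return averaged


if __name__ == "__main__":
    # Two hypothetical edge nodes: one trained on 1 sample, the other on 3.
    global_weights = federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])
    print(global_weights)  # [2.5, 3.5]
```

In a real deployment the edge nodes would send updates over the network and the cloud would redistribute the averaged weights for the next round; transport, scheduling, and secure aggregation are outside this sketch.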

Conclusion

Hyper Node 931815261 Neural Prism routes computation the way a prism splits light, dividing tasks between edge and cloud. It weighs data locality against latency and coordinates autonomous, interoperable components under real-time demands. Balancing on-device inference with cloud collaboration yields tighter resource use and faster decisions. In practice, teams can build resilient, adaptive systems that are transparent, scalable, and privacy-conscious, bringing predictive maintenance, routing, and control together in a single coherent framework.